Ubuntu Global Jam Oneiric

Mon, 05/09/2011 - 7:22pm, tumbleweed

We had quite a successful Ubuntu Global Jam on Saturday. Thanks Yola for hosting us. There were only 5 of us, and I was the only one with any uploads under my belt, but the nice small team meant I had time to help everyone fix some build failures. We had intended to focus on Scientific packages, but it was easier to just pick arbitrary build failures from the most recent archive rebuild.

Maia visited us briefly at lunch time and took some photos, thanks :) Sorry you couldn’t stay longer.

Some stats from the jam

Examples that we fixed together:

I think that’s pretty good for a day’s work :P

In other news

Eep, haven’t blogged in ages. Since I last blogged, I’ve become a Debian Developer, made an Ibid point release, been to (my first) UDS and a Debconf (which were both fantastic, and very different), spent a week sailing in Croatia with my brother, and been pulled into the Ubuntu release team. All this has meant little progress in my MSc, though :/

Release Party Ubuntu Mirror

Sat, 09/10/2010 - 9:37pm, tumbleweed

Our Ubuntu LoCo release parties always end up being part-install-fest. Even when we used to meet at pubs in the early days, people would pull out laptops to burn ISOs for each other and get assistance with upgrades.

As the maintainer of a local university mirror, I took along a mini-mirror to our Lucid Release party and will be doing it for the Maverick party tomorrow. If anyone wants to do this at future events, it's really not that hard to organise, you just need the bandwidth to create the mirror. Disk space requirements (very rough, per architecture, per release): package mirror 50GiB, Ubuntu/Kubuntu CDs 5GiB, Xubuntu/Mythbuntu/UbuntuStudio CDs 5GiB, Ubuntu/Kubuntu/Edubuntu DVDs: 10GiB.
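
As a rough worked example of those figures, here is a small sketch that adds them up. It assumes every item really does scale per architecture and per release, as the list above states; the function name is just for illustration.

```python
# Rough per-architecture, per-release sizes in GiB, taken from the list above.
SIZES_GIB = {
    "package mirror": 50,
    "Ubuntu/Kubuntu CDs": 5,
    "Xubuntu/Mythbuntu/UbuntuStudio CDs": 5,
    "Ubuntu/Kubuntu/Edubuntu DVDs": 10,
}


def estimate_gib(architectures, releases):
    """Return a very rough disk estimate in GiB."""
    per_combination = sum(SIZES_GIB.values())
    return per_combination * architectures * releases


if __name__ == "__main__":
    # Example: i386 and amd64, for lucid and maverick.
    print(estimate_gib(architectures=2, releases=2), "GiB")
```

For two architectures and two releases this comes to 280 GiB, which is comfortable on a 1TB external drive.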

I took the full contents of our ubuntu-archive mirror, but you can probably get away with only i386 and amd64 for the new release people are installing and any old ones they might be upgrading from. You can easily create a partial (only selected architectures and releases) Ubuntu mirror using apt-mirror or debmirror. It takes a while on the first run, but once you have a mirror, updating it is quite efficient.

The CD and DVD repos can easily be mirrored with rsync. Something like --include '*10.04*.iso' --exclude '*.iso' will give you a quick and dirty partial mirror.
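
The reason the include has to come before the exclude is that rsync checks its filter rules in order and the first match decides. Here is a minimal sketch of that logic in Python. It uses fnmatch as a stand-in for rsync's own pattern matching (an approximation that only looks at plain file names), and the file names in the example are made up.

```python
from fnmatch import fnmatchcase

# Rules are checked in order and the first match decides, which is how
# rsync treats its --include/--exclude list.
RULES = [
    ("include", "*10.04*.iso"),
    ("exclude", "*.iso"),
]


def is_transferred(filename, rules=RULES):
    """Return True if a file name would be kept by the ordered rules."""
    for action, pattern in rules:
        if fnmatchcase(filename, pattern):
            return action == "include"
    # Anything that matches no rule is transferred by default.
    return True


if __name__ == "__main__":
    # Hypothetical file names, for illustration only.
    for name in ("ubuntu-10.04-desktop-i386.iso",
                 "ubuntu-9.10-desktop-i386.iso",
                 "MD5SUMS"):
        print(name, is_transferred(name))
```

Swapping the two rules would exclude every ISO, including the 10.04 ones.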

As to the network: I took a 24-port switch and a pile of flyleads. A laptop with a 1TB external hard drive ran the mirror. At this point, you pick between providing Internet access as well (which may result in some poorly-configured machines upgrading over the Internet) or doing it all offline (which makes sense in bandwidth-starved South Africa). For lucid, I chose to run this on a private network - there was a separate WiFi network for Internet access. This slightly complicates upgrades because update-manager only shows the update button when it can connect to changelogs.ubuntu.com, but that's easily worked around by mirroring the meta-release files it looks for:

    for file in meta-release meta-release-development meta-release-lts meta-release-lts-development meta-release-lts-proposed meta-release-proposed meta-release-unit-testing; do
        wget -nv -N "http://changelogs.ubuntu.com/$file"
    done

Instead of getting people to reconfigure their APT sources (and having to modify those meta-release files), we set our DNS server (dnsmasq) to point all the mirrors that people might be using to itself. In /etc/hosts:

Dnsmasq must be told to provide DHCP leases, in /etc/dnsmasq.conf:

    dhcp-range=10.0.0.2,10.0.0.255,12h
    dhcp-authoritative
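
To illustrate the DNS override, here is a sketch that generates /etc/hosts lines pointing a set of mirror names at one address (dnsmasq answers from /etc/hosts by default). The address 10.0.0.1 and every name except changelogs.ubuntu.com are assumptions for the example, not the values used at the party.

```python
# Assumed address of the laptop running the mirror; not stated in the post.
MIRROR_IP = "10.0.0.1"

# Names that clients might have in their APT sources. Only
# changelogs.ubuntu.com is mentioned in the post; the rest are assumptions.
MIRROR_NAMES = [
    "changelogs.ubuntu.com",
    "archive.ubuntu.com",
    "security.ubuntu.com",
    "za.archive.ubuntu.com",
]


def hosts_lines(ip, names):
    """Return one /etc/hosts line per name, all pointing at the same address."""
    return ["%s\t%s" % (ip, name) for name in names]


if __name__ == "__main__":
    print("\n".join(hosts_lines(MIRROR_IP, MIRROR_NAMES)))
```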

Then we ran an Apache (all on the default virtualhost) serving the Ubuntu archive mirror as /ubuntu, the meta-release files in the root, and CDs / DVDs in /ubuntu-releases, /ubuntu-cdimage. There were a couple of other useful extras thrown in.

We could have also run ftp and rsync servers, and provided a netboot environment. But there was a party to be had :)

For the maverick party, I used this script to prepare the mirror. It's obviously very specific to my local mirror. Tweak to taste:

    #!/bin/bash
    # bash is needed for the brace expansion in the --dist argument below
    set -e
    set -u

    MIRROR=ftp.leg.uct.ac.za
    MEDIA=/media/external/ubu

    export GNUPGHOME="$MEDIA/gnupg"
    gpg --no-default-keyring --keyring trustedkeys.gpg --keyserver keyserver.ubuntu.com --recv-keys 40976EAF437D05B5 2EBC26B60C5A2783

    debmirror --host $MIRROR --root ubuntu --method rsync \
        --rsync-options="-aIL --partial --no-motd" -p --i18n --getcontents \
        --section main,universe,multiverse,restricted \
        --dist $(echo {lucid,maverick}{,-security,-updates,-backports,-proposed} | tr ' ' ,) \
        --arch i386,amd64 \
        $MEDIA/ubuntu/

    debmirror --host $MIRROR --root medibuntu --method rsync \
        --rsync-options="-aL --partial --no-motd" -p --i18n --getcontents \
        --section free,non-free \
        --dist lucid,maverick \
        --arch i386,amd64 \
        $MEDIA/medibuntu/

    rsync -aHvP --no-motd --delete \
        rsync://$MIRROR/pub/packages/corefonts/ \
        $MEDIA/corefonts/

    rsync -aHvP --no-motd --delete \
        rsync://$MIRROR/pub/linux/ubuntu-changelogs/ \
        $MEDIA/ubuntu-changelogs/

    rsync -aHvP --no-motd \
        rsync://$MIRROR/pub/linux/ubuntu-releases/ \
        --include 'ubuntu-10.10*' --include 'ubuntu-10.04*' \
        --exclude '*.iso' --exclude '*.template' --exclude '*.jigdo' --exclude '*.list' --exclude '*.zsync' --exclude '*.img' --exclude '*.manifest' \
        $MEDIA/ubuntu-releases/

    rsync -aHvP --no-motd \
        rsync://$MIRROR/pub/linux/ubuntu-dvd/ \
        --exclude 'gutsy' --exclude 'hardy' --exclude 'jaunty' --exclude 'karmic' \
        $MEDIA/ubuntu-cdimage/
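
The --dist argument in the script relies on bash brace expansion to build the list of suites. If that one-liner looks cryptic, this sketch builds the same comma-separated list in Python; the function name is mine, and it is only meant to show what the expansion produces.

```python
from itertools import product

RELEASES = ["lucid", "maverick"]
# The empty string stands for the release pocket itself.
POCKETS = ["", "-security", "-updates", "-backports", "-proposed"]


def dist_list(releases, pockets):
    """Combine every release with every pocket, release-major, comma-separated."""
    return ",".join(release + pocket for release, pocket in product(releases, pockets))


if __name__ == "__main__":
    print(dist_list(RELEASES, POCKETS))
```

The result starts with lucid,lucid-security,lucid-updates and ends with maverick-proposed, ten suites in all.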

We'll see how it works out tomorrow. Looking forward to a good party.

Fun with Squid and CDNs

Wed, 18/02/2009 - 12:29pm, tumbleweed

One neat upgrade in Debian's recent 5.0.0 release was Squid 2.7. In this bandwidth-starved corner of the world, a caching proxy is a nice addition to a network, as it should shave at least 10% off your monthly bandwidth usage. However, the recent rise of CDNs has made many objects that should be highly cacheable, un-cacheable.

For example, a YouTube video has a static ID. The same piece of video will always have the same ID, it'll never be replaced by anything else (except a "sorry this is no longer available" notice). But it's served from one of many delivery servers. If I watch it once, it may come from

But the next time it may come from v15.cache.googlevideo.com. And that's not all, the signature parameter is unique (to protect against hot-linking) as well as other not-static parameters.

Basically, any proxy will probably refuse to cache it (because of all the parameters) and if it did, it'd be a waste of space because the signature would ensure that no one would ever access that cached item again.

I came across a page on the squid wiki that addresses a solution to this.

Squid 2.7 introduces the concept of a storeurl_rewrite_program which gets a chance to rewrite any URL before storing / accessing an item in the cache. Thus we could rewrite our example file to

We've normalised the URL and kept the only two parameters that matter, the video id and the itag which specifies the video quality level.
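
Here is a minimal sketch of that normalisation, written for Python 3 (unlike the Python 2 script at the end of this post). The SQUIDINTERNAL host name follows that script; the video ID, the signatures and the first host name in the example are made up.

```python
from urllib.parse import parse_qs, urlsplit

# Placeholder host used as the cache key; it mirrors the SQUIDINTERNAL
# name used by the script at the end of this post.
STORE_HOST = "video-srv.youtube.com.SQUIDINTERNAL"


def store_url(url):
    """Reduce a videoplayback URL to its stable parts: id and itag."""
    query = parse_qs(urlsplit(url).query)
    if "id" not in query:
        return url  # nothing to normalise
    stored = "http://%s/videoplayback?id=%s" % (STORE_HOST, query["id"][0])
    if "itag" in query:
        stored += "&itag=%s" % query["itag"][0]
    return stored


if __name__ == "__main__":
    # Hypothetical URLs: same video, different servers and signatures.
    first = "http://v3.cache.googlevideo.com/videoplayback?id=abc123&itag=34&signature=XYZ"
    second = "http://v15.cache.googlevideo.com/videoplayback?itag=34&signature=QRS&id=abc123"
    print(store_url(first))
    print(store_url(first) == store_url(second))
```

Both requests map to the same store URL, so the second one can be served from the cache.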

The squid wiki page I mentioned includes a sample perl script to perform this rewrite. They don't include the itag, and my perl isn't good enough to fix that without making a dog's breakfast of it, so I re-wrote it in Python. You can find it at the end of this post. Each line the rewrite program reads contains a concurrency ID, the URL to be rewritten, and some parameters. We output the concurrency ID and the URL to rewrite to.

The concurrency ID is a way to use a single script to process rewrites from different squid threads in parallel. The documentation on this is almost non-existent, but if you specify a non-zero storeurl_rewrite_concurrency, each request and response will be prepended with a numeric ID. The perl script concatenated this directly before the re-written URL, but I separate them with a space. Both seem to work. (Bad documentation sucks)
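
The line protocol boils down to very little code. This Python 3 sketch handles a single request line, assuming the layout described above (ID, URL, then everything else); the example request and the toy rewrite function are illustrative only.

```python
def handle_line(line, rewrite):
    """Turn one helper request line into one response line.

    `rewrite` is any function mapping a URL to its store URL.
    """
    # With concurrency enabled, the first field is the numeric ID, the
    # second is the URL, and the rest is extra request detail we ignore.
    fields = line.rstrip("\n").split(" ", 2)
    if len(fields) < 2:
        # Not in the expected shape: echo it back untouched.
        return line.rstrip("\n")
    channel, url = fields[0], fields[1]
    return "%s %s" % (channel, rewrite(url))


if __name__ == "__main__":
    # Toy rewrite that just drops the query string.
    def strip_query(url):
        return url.split("?", 1)[0]

    print(handle_line("7 http://example.com/a.png?x=1 10.0.0.5/- - GET\n", strip_query))
```

A real helper would read lines from stdin in a loop and flush stdout after every response, as the full script does.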

All that's left is to tell Squid to use this, and to override the caching rules on these URLs.

    storeurl_rewrite_children 1
    storeurl_rewrite_concurrency 10

    # The keywords for all youtube video files are "get_video?", "videodownload?" and "videoplayback?id"
    # The "\.(jp(e?g|e|2)|gif|png|tiff?|bmp|ico|flv)\?" is only for pictures and other videos
    acl store_rewrite_list urlpath_regex \/(get_video\?|videodownload\?|videoplayback\?id) \.(jp(e?g|e|2)|gif|png|tiff?|bmp|ico|flv)\? \/ads\?
    acl store_rewrite_list_web url_regex ^http:\/\/([A-Za-z-]+[0-9]+)*\.[A-Za-z]*\.[A-Za-z]*
    acl store_rewrite_list_path urlpath_regex \.(jp(e?g|e|2)|gif|png|tiff?|bmp|ico|flv)$
    acl store_rewrite_list_web_CDN url_regex ^http:\/\/[a-z]+[0-9]\.google\.com doubleclick\.net

    # Rewrite youtube URLs
    storeurl_access allow store_rewrite_list
    # this is not related to youtube video, it's only for CDN pictures
    storeurl_access allow store_rewrite_list_web_CDN
    storeurl_access allow store_rewrite_list_web store_rewrite_list_path
    storeurl_access deny all

    # Default refresh_patterns
    refresh_pattern ^ftp: 1440 20% 10080
    refresh_pattern ^gopher: 1440 0% 1440
    refresh_pattern -i (/cgi-bin/|\?) 0 0% 0

    # Updates (unrelated to this post, but useful settings to have):
    refresh_pattern windowsupdate.com/.*\.(cab|exe)(\?|$) 518400 100% 518400 reload-into-ims
    refresh_pattern update.microsoft.com/.*\.(cab|exe)(\?|$) 518400 100% 518400 reload-into-ims
    refresh_pattern download.microsoft.com/.*\.(cab|exe)(\?|$) 518400 100% 518400 reload-into-ims
    refresh_pattern (Release|Package(.gz)*)$ 0 20% 2880
    refresh_pattern \.deb$ 518400 100% 518400 override-expire

    # Youtube:
    refresh_pattern -i (get_video\?|videodownload\?|videoplayback\?) 161280 50000% 525948 override-expire ignore-reload

    # Other long-lived items
    refresh_pattern -i \.(jp(e?g|e|2)|gif|png|tiff?|bmp|ico|flv)(\?|$) 161280 3000% 525948 override-expire reload-into-ims

    refresh_pattern . 0 20% 4320

    # All of the above can cause a redirect loop when the server
    # doesn't send a "Cache-control: no-cache" header with a 302 redirect.
    # This is a work-around.
    minimum_object_size 512 bytes
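
The min and max fields of refresh_pattern are given in minutes, which makes the big numbers above hard to read at a glance. This small sketch converts the ones used here into days; it is plain arithmetic and not part of the Squid setup.

```python
MINUTES_PER_DAY = 24 * 60


def minutes_to_days(minutes):
    """Convert a refresh_pattern min/max value (minutes) to days."""
    return minutes / MINUTES_PER_DAY


if __name__ == "__main__":
    for minutes in (1440, 4320, 10080, 161280, 518400, 525948):
        print("%7d minutes = %.2f days" % (minutes, minutes_to_days(minutes)))
```

So the YouTube rule treats videos as fresh for at least 112 days and at most about a year, and the .deb rule keeps packages for 360 days.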

Done. And it seems to be working relatively well. If only I'd set this up last year when I had pesky house-mates watching youtube all day ;-)

It should of course be noted that doing this instructs your Squid Proxy to break rules.

Both override-expire and ignore-reload violate guarantees that the HTTP standards provide the browser and web-server about their communication with each other.

They are relatively benign changes, but illegal nonetheless.

And it goes without saying that rewriting the URLs of stored objects could cause some major breakage by assuming that different objects (with different URLs) are the same. The provided regexes seem sane enough to assume that this won't happen, but YMMV.

    # vim:et:ts=4:sw=4:

    import re
    import sys
    import urlparse

    youtube_getvid_res = [
        re.compile(r"^http:\/\/([A-Za-z]*?)-(.*?)\.(.*)\.youtube\.com\/get_video\?video_id=(.*?)&(.*?)$"),
        re.compile(r"^http:\/\/(.*?)\/get_video\?video_id=(.*?)&(.*?)$"),
        re.compile(r"^http:\/\/(.*?)video_id=(.*?)&(.*?)$"),
    ]

    youtube_playback_re = re.compile(r"^http:\/\/(.*?)\/videoplayback\?id=(.*?)&(.*?)$")

    others = [
        (re.compile(r"^http:\/\/(.*?)\/(ads)\?(?:.*?)$"), "http://%s/%s"),
        (re.compile(r"^http:\/\/(?:.*?)\.yimg\.com\/(?:.*?)\.yimg\.com\/(.*?)\?(?:.*?)$"), "http://cdn.yimg.com/%s"),
        (re.compile(r"^http:\/\/(?:(?:[A-Za-z]+[0-9-.]+)*?)\.(.*?)\.(.*?)\/(.*?)\.(.*?)\?(?:.*?)$"), "http://cdn.%s.%s.SQUIDINTERNAL/%s.%s"),
        (re.compile(r"^http:\/\/(?:(?:[A-Za-z]+[0-9-.]+)*?)\.(.*?)\.(.*?)\/(.*?)\.(.{3,5})$"), "http://cdn.%s.%s.SQUIDINTERNAL/%s.%s"),
        (re.compile(r"^http:\/\/(?:(?:[A-Za-z]+[0-9-.]+)*?)\.(.*?)\.(.*?)\/(.*?)$"), "http://cdn.%s.%s.SQUIDINTERNAL/%s"),
        (re.compile(r"^http:\/\/(.*?)\/(.*?)\.(jp(?:e?g|e|2)|gif|png|tiff?|bmp|ico|flv)\?(?:.*?)$"), "http://%s/%s.%s"),
        (re.compile(r"^http:\/\/(.*?)\/(.*?)\;(?:.*?)$"), "http://%s/%s"),
    ]

    def parse_params(url):
        "Convert a URL's set of GET parameters into a dictionary"
        params = {}
        for param in urlparse.urlsplit(url)[3].split("&"):
            if "=" in param:
                n, p = param.split("=", 1)
                params[n] = p
        return params

    while True:
        line = sys.stdin.readline()
        if line == "":
            break
        try:
            channel, url, other = line.split(" ", 2)
            matched = False

            for re in youtube_getvid_res:
                if re.match(url):
                    params = parse_params(url)
                    if "fmt" in params:
                        print channel, "http://video-srv.youtube.com.SQUIDINTERNAL/get_video?video_id=%s&fmt=%s" % (params["video_id"], params["fmt"])
                    else:
                        print channel, "http://video-srv.youtube.com.SQUIDINTERNAL/get_video?video_id=%s" % params["video_id"]
                    matched = True
                    break

            if not matched and youtube_playback_re.match(url):
                params = parse_params(url)
                if "itag" in params:
                    print channel, "http://video-srv.youtube.com.SQUIDINTERNAL/videoplayback?id=%s&itag=%s" % (params["id"], params["itag"])
                else:
                    print channel, "http://video-srv.youtube.com.SQUIDINTERNAL/videoplayback?id=%s" % params["id"]
                matched = True

            if not matched:
                for re, pattern in others:
                    m = re.match(url)
                    if m:
                        print channel, pattern % m.groups()
                        matched = True
                        break

            if not matched:
                print channel, url

        except Exception:
            # For Debugging only. In production we want this to never die.
            #raise
            print line

        sys.stdout.flush()

Ravioli and More Electrical Disasters

Tue, 23/12/2008 - 10:12pm, tumbleweed

This afternoon, I went to my parents' house to make Pasta for the Christmas Eve dinner. It's one of the few family traditions we have; almost every year we dust the dining room table down with flour, get out the pasta rolling machines, and make a few hundred ravioli. It takes the best part of an afternoon, you have to work quite fast, and the final product doesn't keep very well. They need to be stored separated on floured trays, and turned twice every day to avoid sticking to each other or the trays. However, they should taste delicious tomorrow night. Some past photos.

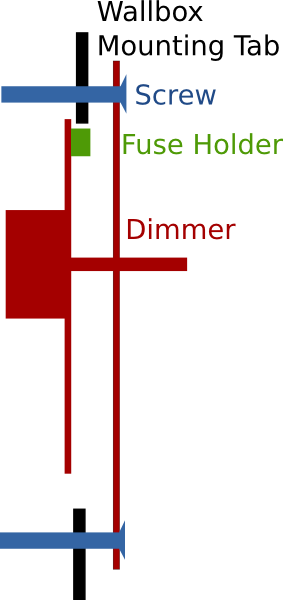

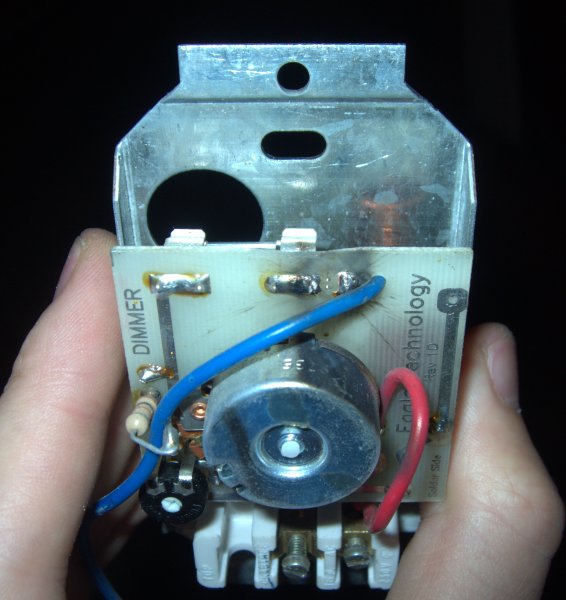

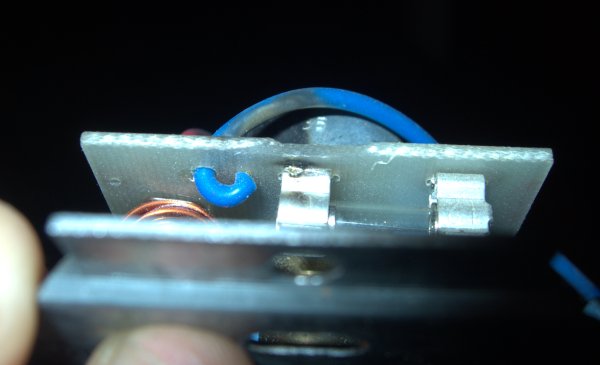

In-between making ravioli (and fixing computers), I was dispatched to fix the broken light above our work table. As suspected, it was the dimmer fuse that had blown (in fact, decimated). So, in with a new one, and I had to re-mount the dimmer. I could see that the dimmer was terribly designed — the top mounting screw comes really close to the circuit board, and right at the edge of the board is the un-insulated fuse holder. This got me mumbling something about bad design, and very gingerly using insulated tools on the screw. Here's a diagram:

Obviously, during the mounting, the mounting tab will come really close to the board and fuse holder. Naturally, that happened, and there was a very loud bang. The circuit board trace next to the holder had burned through. Hmph. How on earth did they get this certified as safe for home use?

I suppose it's also another reminder not to work on live circuits. I've had enough of those reminders in my life...

smartmontools

Wed, 08/10/2008 - 5:20pm, tumbleweed

In line with my SysRq Post comes another bit of assumed knowledge, SMART. Let’s begin at the beginning (and stick to the PC world).

Magnetic storage is fault-prone. In the old days, when you formatted a drive, part of the formatting process was to check that each block seemed to be able to store data. All the bad blocks would be listed in a “bad block list” and the file-system would never try to use them. File-system checks would also be able to mark blocks as being bad.

As disks got bigger, this meant that formatting could take hours. Drives had also become fancy enough that they could manage bad blocks themselves, and so a shift occurred. Disks were shipped with some extra spare space that the computer can’t see. Should the drive controller detect that a block went bad (they had parity checks), it could re-allocate a block from the extra space to stand in for the bad block. If it was able to recover the data from the bad block, this could be totally transparent to the file-system; if not, the file-system would see a read-error and have to handle it.

This is where we are today. File-systems still support the concept of bad blocks, but in practice they only occur when a disk runs out of spare blocks.

This came with a problem: how would you know if a disk was doing ok or not? Well, a standard was created called SMART. This allows you to talk to the drive controller and (amongst other things) find out the state of the disk. On Linux, we do this via the package smartmontools.

Why is this useful? Well, you can ask the disk to run a variety of tests (including a full bad block scan); these are useful for RMAing a bad drive with minimum hassle. You can also get the drive’s error-log, which can give you some indication of its reliability. You can see its temperature, age, and serial number (useful when you have to know which drive to unplug). But, most importantly, you can find out the state of bad sectors: how many sectors does the drive think are bad, and how many has it reallocated?
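
As a rough illustration, here is a Python sketch that pulls the bad-sector counters out of the attribute table printed by smartctl -A (part of smartmontools). The attribute names are the common ones but vary between vendors, and the parsing assumes the usual ten-column table layout, so treat it as a starting point rather than a robust tool.

```python
import subprocess

# Commonly used attribute names for bad-sector state; vendors differ.
WATCHED = ("Reallocated_Sector_Ct", "Current_Pending_Sector", "Offline_Uncorrectable")


def parse_attributes(text):
    """Extract raw values of the watched attributes from `smartctl -A` output."""
    values = {}
    for line in text.splitlines():
        fields = line.split()
        # Attribute rows start with a numeric ID and have the raw value in column 10.
        if len(fields) >= 10 and fields[0].isdigit() and fields[1] in WATCHED:
            try:
                values[fields[1]] = int(fields[9])
            except ValueError:
                continue
    return values


def read_attributes(device):
    """Run smartctl on a device (usually needs root) and parse the result."""
    # smartctl's exit status is a bit mask, so it is deliberately not checked.
    result = subprocess.run(["smartctl", "-A", device], capture_output=True, text=True)
    return parse_attributes(result.stdout)
```

Calling read_attributes("/dev/sda") as root would return something like a dictionary of counter names to values; anything non-zero is worth a closer look.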

Why is that useful?

In the event of a bad block, you can manually force a re-allocation. This way it happens under your terms, and you’ll know exactly what got corrupted.

Next, Google published a paper linking non-zero bad sector values to drive failure. Do you really want to be trusting known-non-trustworthy drives with critical data?

Finally, there is a nasty RAID situation. If you have a RAID-5 array with, say, 6 drives in it and one fails, either the RAID system will automatically select a spare drive (if it has one), or you’ll have to replace it. The system will then re-build on the new disk, reading every sector on all the other disks, to calculate the sector contents for the new disk. If one of those reads fails (bad sector) you’ll now be up shit-creek without a paddle. The RAID system will kick out the disk with the read failure, and you’ll have a RAID-5 array with two bad disks in it, one more than RAID-5 can handle. There are tricks to get such a RAID-5 array back online, and I’ve done it, but you will have corruption, and it’s risky as hell.

So, before you go replacing RAID-5 member-disks, check the SMART status of all the other disks.
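
To see why a single unreadable sector is fatal during a rebuild, here is a toy sketch of RAID-5 parity in Python. It models one stripe as a few byte strings plus their XOR parity; real arrays rotate parity across disks and work on much larger blocks, so this only illustrates the arithmetic.

```python
from functools import reduce


def xor_blocks(blocks):
    """XOR equal-length byte blocks together, byte by byte."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def rebuild(surviving_blocks):
    """Rebuild the one missing block of a stripe from all the others.

    A block that could not be read is passed as None.
    """
    if any(block is None for block in surviving_blocks):
        raise IOError("unreadable block on a surviving disk: stripe cannot be rebuilt")
    return xor_blocks(surviving_blocks)


if __name__ == "__main__":
    data = [b"\x01\x02", b"\x10\x20", b"\xff\x00"]
    parity = xor_blocks(data)
    # The disk holding data[1] fails; every other block is needed to recover it.
    print(rebuild([data[0], data[2], parity]) == data[1])
```

With every surviving block readable, the lost block comes back exactly. Replace any one of them with None (a bad sector) and there is nothing left to compute it from.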

Personally, I get twitchy when any of my drives have bad sectors. I have smartd monitoring them, and I’ll attempt to RMA them as soon as a sector goes bad.
